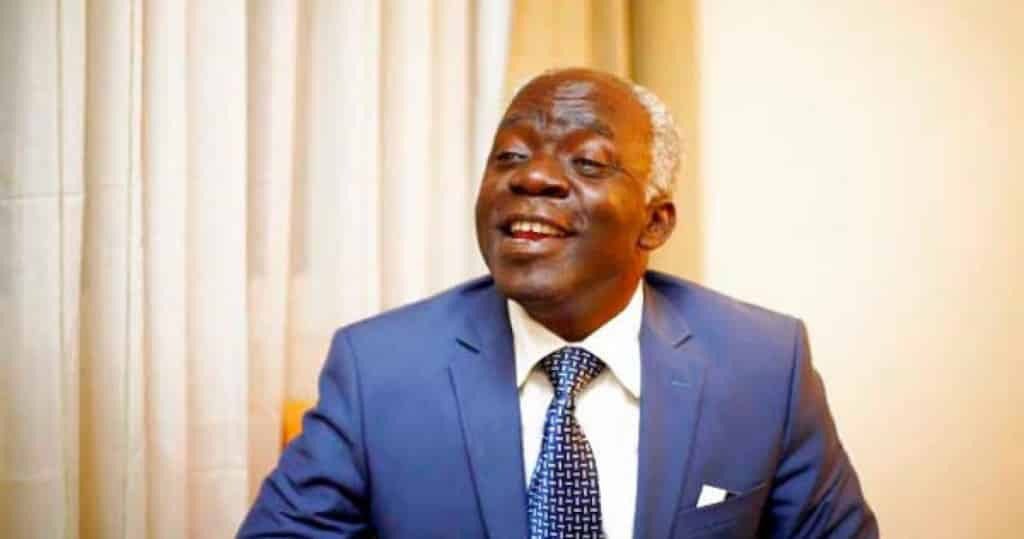

In a landmark ruling, a Lagos State High Court has ordered Meta Platforms Inc, the parent company of Facebook and Instagram, to pay $25,000 in damages to human rights lawyer Femi Falana, SAN. The case, which centered on invasion of privacy and the spread of misinformation, has sparked intense debate among legal and tech experts regarding the future of digital expression in Nigeria.

The legal battle began in early 2025 after a video circulated on Facebook falsely claiming that Falana was battling a severe medical condition. Meta argued that it was simply a neutral host for user-generated content, but the court disagreed. The presiding judge ruled that because Meta monetizes content and uses algorithms to control its distribution, it acts as a “joint data controller” rather than a passive conduit. This means the tech giant owes a direct duty of care to its users under the Nigeria Data Protection Act of 2023.

While the verdict is being hailed by some as a win for individual digital rights, several legal scholars have raised red flags. Privacy expert Gbenga Odugbemi argued that the court may have taken a “doctrinal shortcut.” He noted that issues involving reputation and false statements are traditionally handled through defamation or negligence laws, rather than privacy statutes. Applying privacy law as a “general-purpose substitute” for other legal grievances, he warned, could create an unsustainable framework for future cases.

Advocate Dirontsho Mohale, founder of Baakedi Professional Practice, also expressed concern over Meta being labeled a joint controller with the person who posted the video. Mohale warned that if this interpretation sticks, tech firms could face a daily barrage of lawsuits worldwide. There is a fear that this could set a precedent where “if you cannot find the speaker, you sue the microphone,” effectively making platforms responsible for every single post made by their billions of users.

The ripple effects for the average Nigerian internet user could be significant. Experts told Reports that to avoid multimillion-dollar liabilities, platforms might resort to aggressive “over-moderation.” Perfectly legal speech, such as political satire, investigative journalism, or even heated social commentary, might then be pre-emptively deleted by AI filters to shield the companies from legal risk. Instead of a safer internet, users might find themselves facing a more censored one.

There is also the economic impact on the local tech ecosystem. While a $25,000 damages award is a drop in the bucket for a giant like Meta, legal costs on that scale could easily bankrupt a rising African startup. If every social app is suddenly responsible for its users’ posts, the cost of doing business in Nigeria might become too high for new innovators. Meta has already hinted in the past that it could withdraw certain services if regulations become too restrictive, raising the stakes for the country’s digital economy.